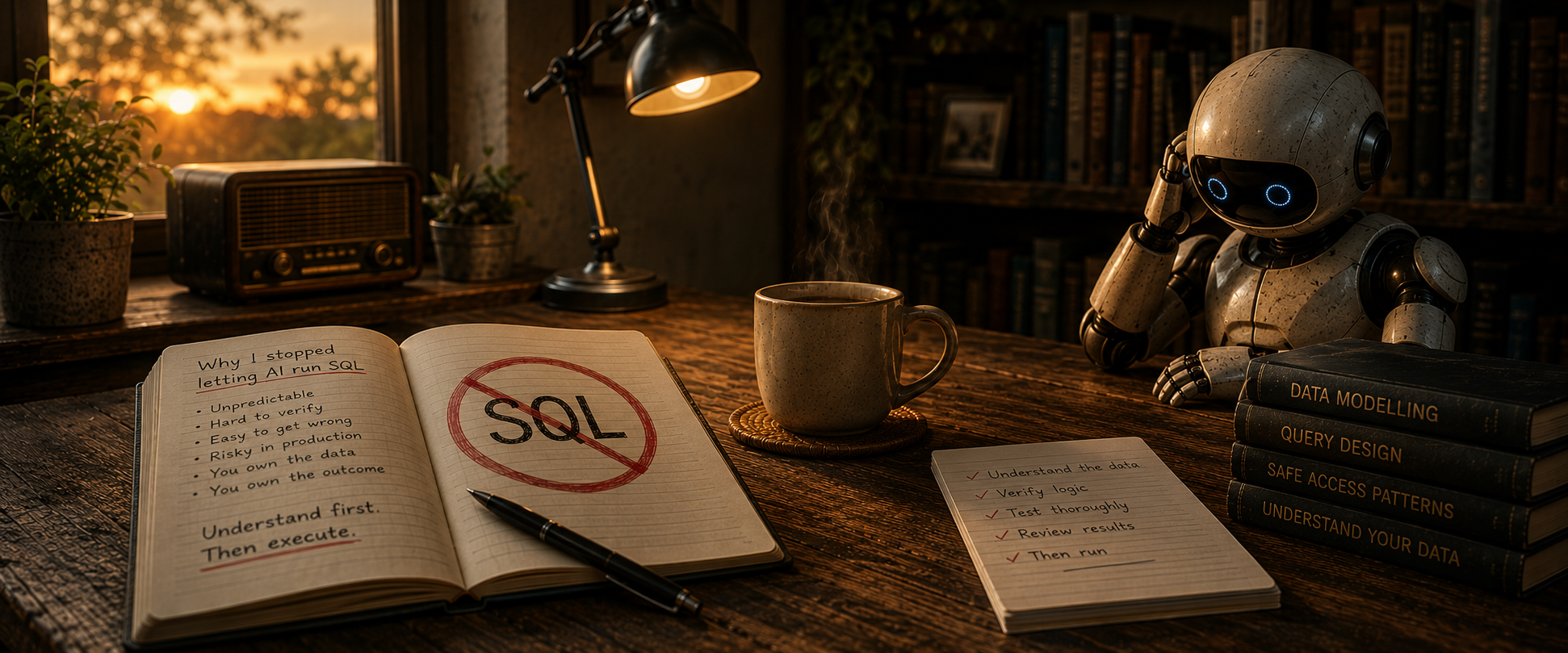

Why I Stopped Letting AI Run SQL

AI can write SQL for you and run it immediately. That is useful, but it also changes the risk boundary around database access.

AI can write SQL for you and run it immediately. That’s the problem.

Much of the recent excitement around AI and databases has focused on MCP (Model Context Protocol) — tools that let you “talk to your data” using natural language. The LLM translates your request into SQL and executes it on the spot.

For exploration and quick insights, that’s genuinely useful. But for serious development or DBA work, it quickly falls apart — because the issue isn’t capability, it’s control.

When the AI handles both generation and execution:

- SQL often isn’t saved anywhere

- There’s little or no audit trail unless you build one yourself

- It’s hard to repeat or refine previous steps

- You lose track of what’s already been run

Convenient? Yes. Reproducible? No. And for professional database work, that’s a dealbreaker.

So I’ve gone back to something more traditional: SQL scripts in files, version-controlled in Git — with AI helping to write them, not run them blindly.

The Workflow: AI + SQL Scripts + SQLcl

My current approach is straightforward:

- Use an AI coding tool (Codex, Claude Code, or similar) to generate SQL scripts

- Save those scripts as files

- Review them

- Run them using SQLcl

AI accelerates the writing. I keep the control.

Why SQLcl? And Here’s the Setup I Actually Use

SQLcl is far more than a SQL runner — it’s a full command-line interface with scripting, formatting, and automation features built in. But the feature that makes it click with AI-driven workflows is the connection manager (connmgr).

Connection handling is one of the biggest friction points when automating Oracle database work — wallets, service names, credentials. SQLcl lets you wrap all of that behind a simple saved name.

Set up your wallet (if using Autonomous Database):

sql /nolog

SQL> set cloudconfig /your/wallet/location/wallet.zip

Save a connection:

SQL> connect -save mydb -savepwd admin/password@myservice

This connects, and if successful, stores the full connection details including wallet config.

Use it from the command line:

sql -name mydb

Or switch connections within a session:

SQL> connect -name mydb

Once this is set up, your AI tool doesn’t need to know anything about wallets or credentials. You simply tell it:

“Generate a SQL script to create the required indexes, save it as create_indexes.sql, and run it using: sql -name mydb @create_indexes.sql”

The SQL is visible, reviewable, committed to Git, and the execution is explicit. Every time.

A note on -savepwd: This flag stores your password alongside the connection. If you later need to update the credentials, you might expect to just re-run the same connect -save command — but that won’t work. To replace an existing saved connection, you need the -replace (or -rp) flag:

SQL> connect -save mydb -replace -savepwd admin/newpassword@myservice

This tripped me up at first. The -rp flag can only update an existing connection — it won’t create a new one. So the sequence is always: create with -save, update with -save -rp.

A Real Reason to Care About Safety

I’ll spare you the usual “always review AI-generated SQL” advice — you already know that. But here’s what’s easy to underestimate: AI-generated SQL can be syntactically perfect and logically wrong. It can create an index on the wrong columns, use a join condition that silently filters out rows, or generate a MERGE that overwrites data you didn’t intend to touch.

When MCP runs that SQL the moment it’s generated, you don’t get a chance to catch those problems. When it’s sitting in a file waiting for you to run it, you do.

That’s the whole point.

Final Thought

MCP-style tools have a place — they’re excellent for ad-hoc exploration and quick questions about your data. But for development and DBA work that needs to be repeatable, auditable, and safe, they aren’t enough.

The pattern that works: let AI generate, let scripts capture, let SQLcl execute, and keep yourself in the loop at every step.

Assistant, not operator. That’s how AI should work with your database.

What’s Next

This workflow is deliberately simple — and that’s the point. But there’s a lot more you can build on top of it. I’m working on a more complete SQL runner framework that adds structure around script ordering, environment targeting, error handling, and execution logging. I’ll share that in an upcoming post.