Introduction

Discussion of data models in Bigdata environments usually centres around technology focused question – file formats, what compression to use, which columnar store is preferred, whether the file structures are splittable and so on. While these are valid and important questions, there are more fundamental questions to be asked on the higher level data structures that should be built using those various technologies.

In this article I will compare three methods of optimising raw data in schema-on-read, read-only systems.

Challenges

But, surely, there’s nothing to decide? We simply take the raw format of data delivered to the data pool/reservoir/lake (choose your preferred aquatic synonym) and drop it in without transformation. That’s what schema-on-read means. Right ? Well, for may types of event data, this is perfectly correct. If you are handling weblogs or financial transactions or mobile calls, then holding the untransformed data is acceptable. There are technology choices to be made for sure (Avro, Parquet et al), some consolidation into large files is preferable and data partitioning is often a good idea, but in none of these are we significantly changing the 1:1 relationship between the raw input and the storage model. (There is certainly a discussion to be had on holding additional aggregations and other derived data sets, but that’s a discussion for another time).

‘Master data’ on the other hand, definitely poses some real challenges. So let’s just outline the issues here.

Event data which (generally) represents a one-off event. There are no changes of that event to be recorded over time. The event happens, the data is collected and recorded. Analysis can then be applied to the events ‘en-masse’. Master data, on the other hand, usually represents a real-world entity that changes over time. The entity is long-lived – be it a Customer or an Account or even a Financial Trade – it will have a life-history to which the events (as above) effect a change. We are for sure interested in those changes over time, but we are also interested in the entity at a point in time. So there are two fundamental views that we need to be able to support – a record of all the changes over time; and also a ‘snapshot’ of the entity at a specific point in time. Most often that point in time will be the ‘current’ view, but for deeper analysis it is fairly common that a previous point in time view will be needed – e.g. to understand a Customer a year ago such that we can put the customer’s decisions (events) into context. With these two views in mind let’s look at the alternative ways in which we can try to hold this data.

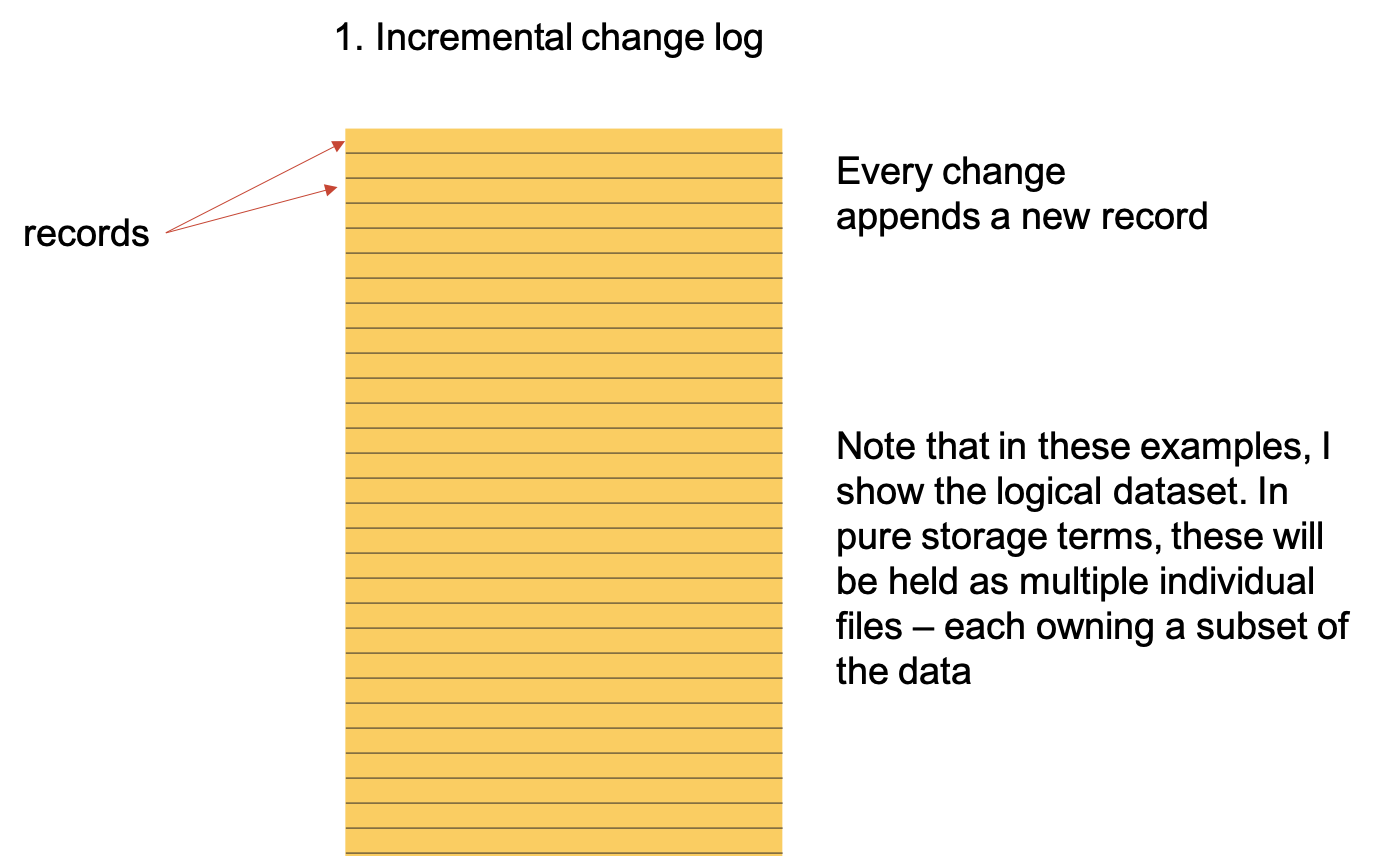

1. Incremental change log

For every change that is made to the entity, a new version (of the record) is created and added to our data lake. No other structures are created.

This is the simplest and for a some situations may be sufficient. However, this has three significant limitations.

The first issue is concerned with how we build our ‘current view’. As suggested above, this is the most often requested view so its ease of use and its efficiency are important. To build the current view, we will need to access the full change log and ‘choose’ the most recent version for every row for the entity. An example here might be an order entity with multiple lines on the order – changes to individual lines may appear at any point in the lifecycle of the parent order.

If we are only recording changes when they happen then the most recent version may be today or yesterday or three years ago. So what should be the simplest type of query becomes a large scale data operation – in fact, it is likely that significant sorting of the entire dataset will be required to find the most recent version.

When changes are infrequent then this may not be too onerous, but if changes are frequent, then the data volumes to be sorted can become orders of magnitude greater than the ‘current view’ that is needed. As sorting is a O log(n) function, then this ends up with a ‘current view’ for which the performance will degrade over time – not as a factor of the ‘current view’ but simply as a factor of the data retention time period. This challenge also extends to recreating any point-in-time view where the same challenges are experienced.

However, and probably more importantly, there is the question of data access for users. There is no easy method to access the entity in that most common view. Just getting a list of customers now needs a reasonably complex data transformation. Whilst it’s possible in SQL to extract the ‘current-view’, it does require a join to a sub-query which many bigdata tools do not support, and that join in any case necessitates a sort or similar operation.

The final challenge is on the ageing out of data. This is especially important when the data comes under privacy and data retention regulations. It must be possible to remove all the data older than a point in time unless that data represents the current view. For example for a ‘dormant’ financial account, there may not have been any changes for many years, but it still represents the ‘current’ view and so that record must be retained. The consequence of this is that the purging of old data becomes a complex and processing intensive activity. For all data that is older than the retention date, we have to read the data, establish on a row-by-row basis whether that data needs to be retained (i.e. is there a more recent update in the log), and then write back the dataset that includes only those rows that are still required.

In summary, this simple approach is attractive because of its simplicity of writing the data, but it very significantly complicates the way we access even the most simple access requirement of the ‘current view’ and so is not, in isolation, a good approach.

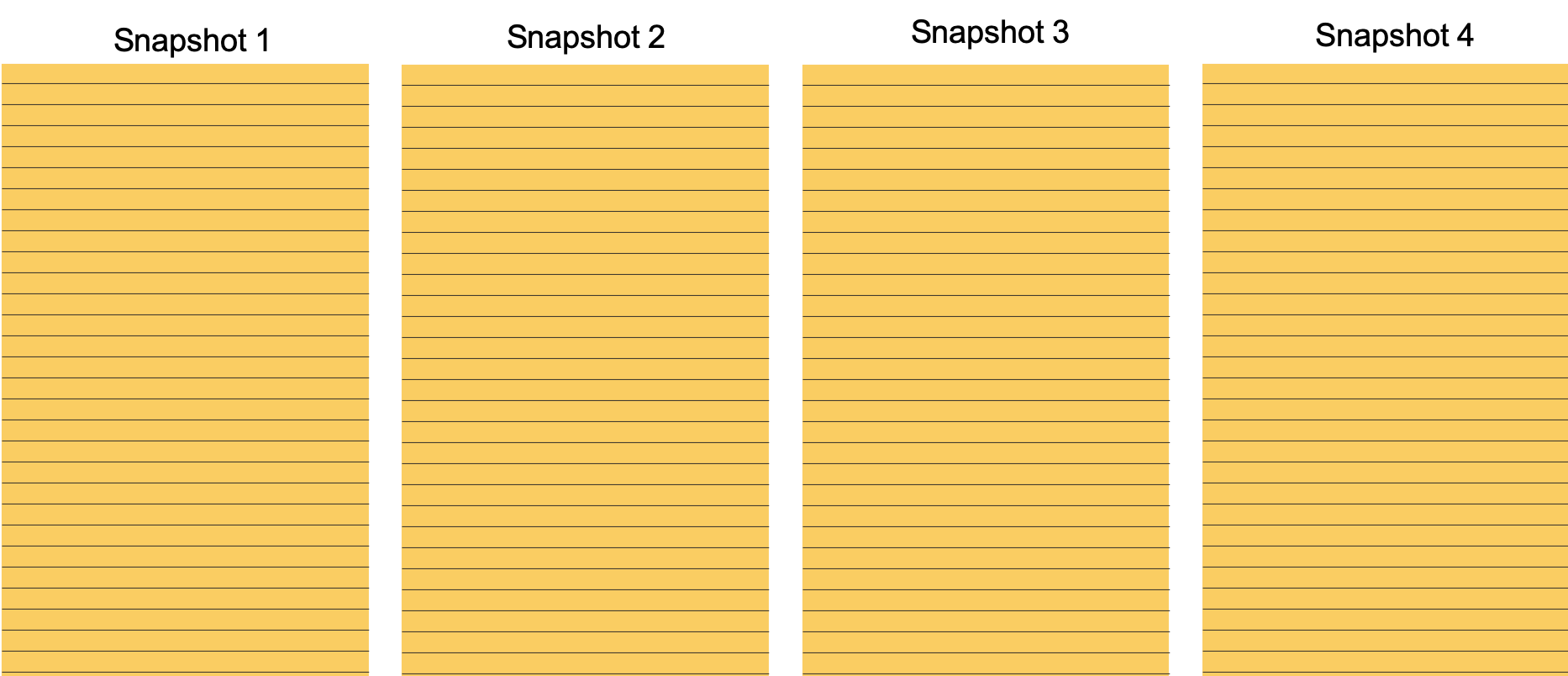

2. Snapshot

A snapshot takes or builds a complete view of an entity at a point in time and saves that view. Each snapshot should represent the full data set. This approach is often used where the master data is sourced from an operation system or from a data warehouse – the data is periodically extracted and dropped into the data lake.

This is a great way to address the point-in-time view in many situations, but unfortunately it also suffers some substantial limitations – obviously the main limitation is the loss of the change history. Because the snapshots are periodic, then any changes which happen during that period are (in effect) aggregated and so the changes cannot be distinguished. There is a real loss of data in this model that cannot be regained. For some types of analysis ( e.g. financial trades, most fraud analysis) every change is noteworthy and so this approach is unacceptable.

The second limitation is the pure data volume that this generates. If a daily snapshot is created, then to keep a two year retention, then the volume of the data set jumps to 730 times the single day size, and this is usually just not acceptable. The may be mitigations to this – for example by not keeping every single snapshot. A typical pattern would be to keep every daily snapshop for a month, once a week for the next 90 days, once a month for a year, once a quarter for the rest. However, the data becomes less and less useful as its accuracy decreases over time. It becomes impossible to be certain of even the simplest fact – e.g. did a customer have a particular product at a point in time.

Similarly to the change log approach, the snapshot approach also significantly complicates access and query. Selecting the correct snapshot to join to is trivial for a programmer writing a one-off analysis, but making this transparent to non-IT users is fairly complex and again not explicitly supported by most of the bigdata tools.

The final limitation is that this is still a snapshot and is, in practice, never up to date. We can never be sure that the information provided is truly accurate, and for the reasons above, we can’t increase the frequency of the snapshot to mitigate. This is becoming more and more important over time as Bigdata solutions are increasingly being used (or perhaps they always were) to support a real-time digital enterprise that requires ever more up-to-date information.

So for these reasons, the snapshot approach also falls short.

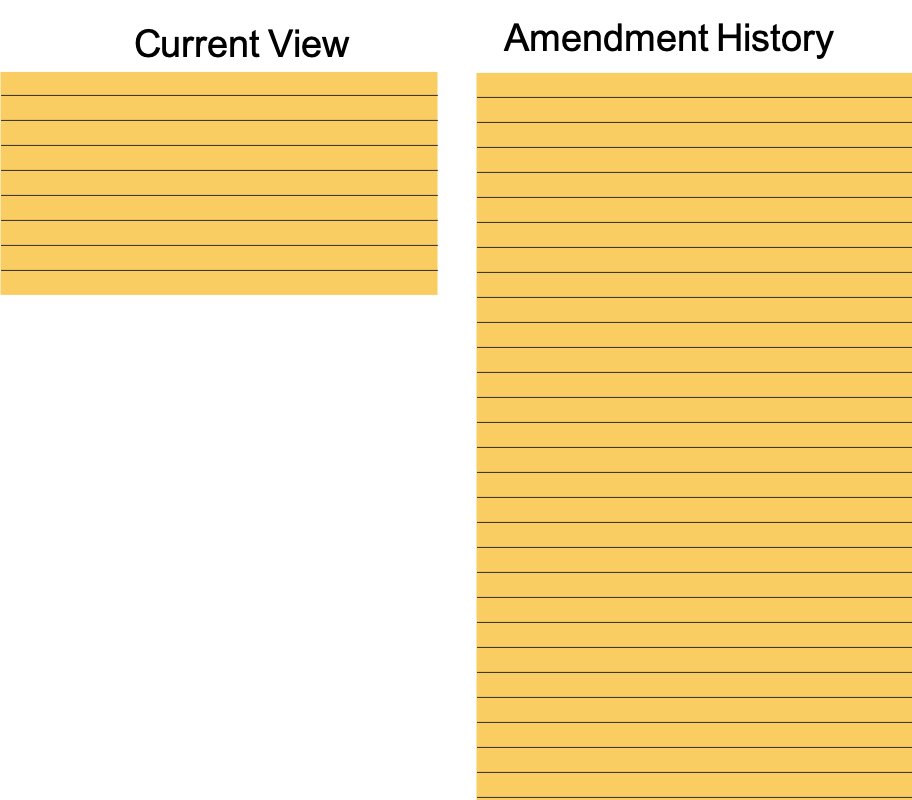

3. Hybrid – Current View + History

Fortunately, there is a model that can address the issues with the first two models without recourse to huge data sets, massive copying of data or losing data accuracy and optimises the most used ‘current’ data set. However, we do have to make a compromise – we have to move to an updatable data-set, not just incremental change record, but true update.

If we introduce a pure current-view model which accepts every change and applies it as it happens to that ‘current-view’ then we immediately have the most up-to-date current view possible. This will address the most used queries (‘current-view’) with a very efficient and minimal dataset. In addition, we must also implement the ‘incremental change log’ from above based on the same complete and granular events. However, now that we have a complete current view, then we have also automatically dealt with the ‘purge’ problem described above. Change records can simply be purged based on their date. If they represent the ‘current view’ for a row then that row will be in the ‘current-view’ so we can purge them from the incremental change record with no (unwanted) loss of data.

Unfortunately, we have added a complexity in that our pure bigdata solutions (those based on HDFS or similar) do not support updatable models and so we do have to introduce additional technology to support the real-time view. However, this is likely to be desirable in any case. A good updatable technology (RDBMS, NoSQL etc) will typically have very low latency and will support orders of magnitude more users than Hadoop family technologies, and so these technologies will most likely be in use already. In fact, to go a little further, that real-time view can become the data structure that supports not only the batch analytical capabilities, but also the real-time requirements driven by an on-line digital platform.

There is an additional enabler that is required of course – how do we run analysis across both technologies – the updatable and the batch – fortunately there are a number of software projects and products already available to address that particular requirement.

Alternatively, in a pure read-only world, the current view can simply be periodically refreshed and replaced. This will need a rewrite to storage of the complete current view for each refresh, so may be a costly operation.

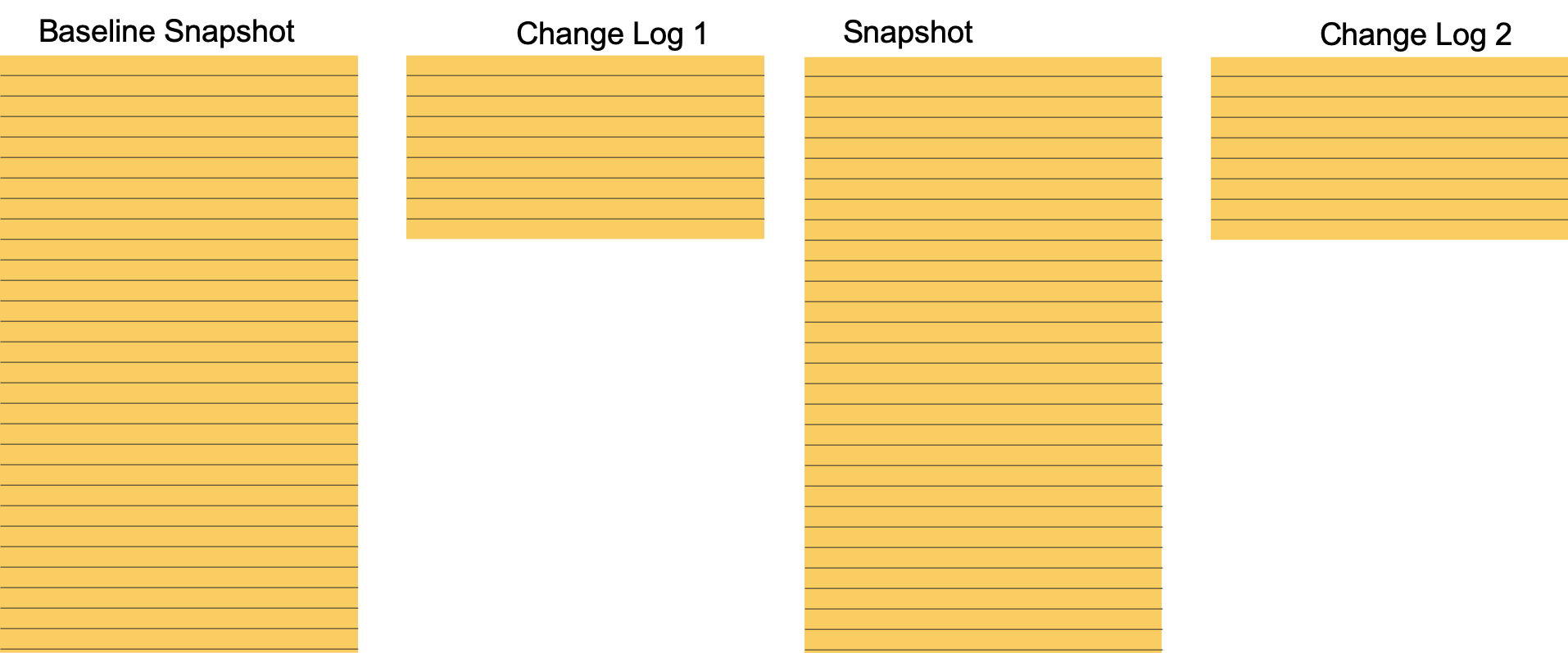

4. Optimised Hybrid

There is still a challenge remaining, however. How do we access the entity view at an arbitrary point in time. i.e. what was the profile of the customer as at 7pm on 23rd February 2014? To address this, we must first assume that there is always a ‘baseline’ snapshot – i.e. a snapshot of the entity which is created on day one of the system. From that baseline, if we apply the changes in our in incremental change log, then we can re-create the entity at any point of time that we desire. There is a significant processing cost to this as described above, but if this query requirement is fairly infrequent, then that is probably price worth paying.

If an arbitrary point-in-time view is a frequent and important capability that is required then we can take some further steps to mitigate those processing costs. The simplest and most obvious is to add periodic read-only snapshots to the model. For example, if we were to add weekly snapshots, then this would mean that the processing required to recreate a full point-in-time view would be a maximum of one week’s changes – i.e. take the snapshot and then apply all the changes for that week up to the required point in time (roll-forward). The snapshots are obviously also available for direct query where full data integrity is not required and the data in the snapshot is ‘good enough’.

Addendum – Handling deletions

Deletions are not a model in themselves, but are an area where additional considerations may be required – especially if using the hybrid model above.

If the model in use includes the real-time updatable model from above, then we have to ask ourselves what happens in the case of the event notification being a deletion. However, firstly we need to be careful of what we mean by deletion as there are many types.

a) logical deletion – where a row has an end-date set

b) true delete – where a row is deleted from a source system

In our system we need to be careful to treat these appropriately. For the first – ‘logical’ deletion – then we could consider this to be just an incremental data change – no special treatment required – but very often the situation is a little more complex. In many cases, (and especially for privacy related data) once a row has been logically marked as as ‘deleted’ then it becomes a candidate for later purging, and it also signifies that no further data changes are to be accepted. (Some troublesome systems insist on the ability to ‘resurrect’ data even after logical deletion, but that really is an outlier and so let’s not complicate more than necessary). There is also a question whether a ‘logically deleted’ row should be included in the ‘current view’ – again in many cases the answer will be negative.

To support this complex treatment of deletions, it may be advantageous to segregate logically deleted rows from the physical ‘current view’ and place them in their own structure. This third structure could be read-only and if partitioned by date allows an easy way of purging those logically deleted rows when their time has come.

For entities where there the entity has large volume, but a relatively short entity life, then adopting this approach leads to a much leaner current view which can lead to significant performance gains.

Summary

While dumping data in a simplistic “raw” form may be an easy option, it is neither efficient in storage terms, nor is it effective in query performance. The added complications of complex deletions means that we need to think very carefully about how we store large data sets.

Leave a comment